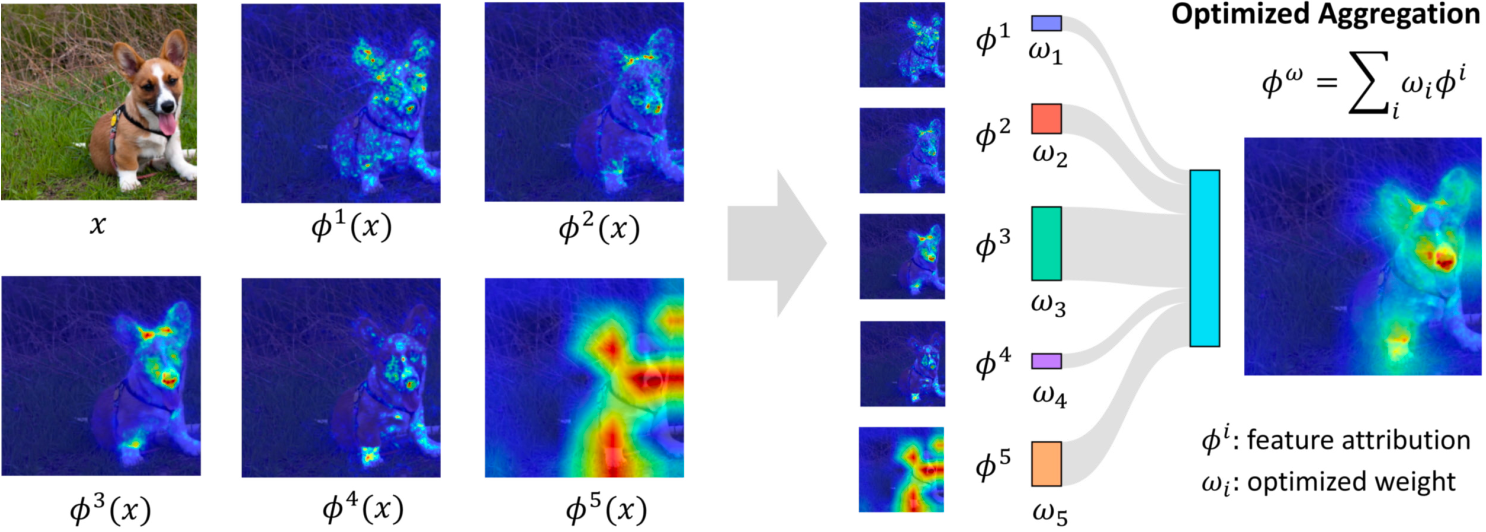

This paper observes that individual explanation methods often produce inconsistent and unstable results, which reduces the reliability of the explanations. To address this issue, the authors combine explanations from multiple distinct methods to improve the quality of feature attribution.

The aggregated explanation is defined as follows:

$$ \phi^{w} = \sum_{i} w_i \phi^i $$

where $w_i$ is the weight of the individual explanation $\phi^i$. To find the globally optimal $\phi^w$, they propose a new concept: generalized L2 metrics for explanations.
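As a minimal sketch of the weighted sum above, the aggregation can be written as a matrix-vector product. The function name `aggregate_explanations`, the toy attribution values, and the weights are all illustrative assumptions, not taken from the paper.

```python
import numpy as np

def aggregate_explanations(phis, weights):
    """Return phi^w = sum_i w_i * phi^i for stacked attribution vectors.

    phis:    array-like of shape (n_methods, n_features), one row per method
    weights: array-like of shape (n_methods,), one weight per method
    """
    phis = np.asarray(phis, dtype=float)
    weights = np.asarray(weights, dtype=float)
    if phis.ndim != 2 or weights.shape != (phis.shape[0],):
        raise ValueError("expected phis of shape (n_methods, n_features) "
                         "and weights of shape (n_methods,)")
    # Row i of phis is scaled by weights[i], then the rows are summed.
    return weights @ phis

# Toy attributions from three hypothetical methods over three features.
phis = [[0.5, 0.1, -0.2],
        [0.3, 0.3, 0.0],
        [0.4, -0.1, -0.4]]
weights = [0.5, 0.25, 0.25]  # illustrative weights, not optimized

phi_w = aggregate_explanations(phis, weights)
print(phi_w)
```

Setting all weights to $1/n$ recovers a plain average of the individual explanations, which is the natural baseline for any optimized choice of $w$.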

If a metric of the aggregated explanation meets the definition of the generalized L2 metric, there exists a global optimum for $\phi^w$ that can be found through convex optimization.
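To illustrate why a quadratic objective in the weights gives a global optimum, here is a small sketch under a strong simplifying assumption: the metric is taken to be the squared Euclidean distance between $\phi^w$ and a hypothetical reference vector `target`. This is not the paper's definition of a generalized L2 metric, which is not reproduced here; the objective, the reference vector, and the helper names are assumptions made only for illustration.

```python
import numpy as np

def squared_l2_loss(weights, phis, target):
    """Assumed stand-in metric: ||sum_i w_i * phi^i - target||^2."""
    residual = np.asarray(weights) @ phis - target
    return float(residual @ residual)

def optimal_weights(phis, target):
    """Minimize the assumed loss over w with ordinary least squares.

    The loss is a convex quadratic in w, so the least-squares solution
    is a global minimizer.
    """
    # Columns of phis.T are the individual explanations phi^i.
    w_opt, *_ = np.linalg.lstsq(phis.T, target, rcond=None)
    return w_opt

# Two hypothetical methods over four features.
phis = np.array([[1.0, 0.0, 2.0, 0.0],
                 [0.0, 1.0, 0.0, 2.0]])
# Hypothetical reference built from known weights so the optimum is checkable.
true_w = np.array([0.7, 0.3])
target = true_w @ phis

w_opt = optimal_weights(phis, target)
best_loss = squared_l2_loss(w_opt, phis, target)

# Perturbing the optimum in any direction cannot lower a convex loss.
perturbed_loss = squared_l2_loss(w_opt + np.array([0.05, -0.05]), phis, target)
```

In this sketch the weights are unconstrained. Any constraints the paper places on $w$ (for example, requiring the weights to sum to one) would call for a constrained solver rather than plain least squares.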

It is worth noting that their method is inspired by ensemble neural networks, which their proof also references.

References

Provably Better Explanations with Optimized Aggregation of Feature Attributions. ICML 2024.